Hyperacusis Research is grateful for this comprehensive review of the Second International Conference on Hyperacusis, written by Iver Juster, MD. The summary provides insights into vital hyperacusis topics and offers an excellent analysis of possible directions for future research.

Author: Iver Juster, MD is a Family Medicine physician who trained and practiced for many years in the U.S. He subsequently undertook additional training and worked in U.S. managed care and medical informatics[1]. His work focuses on the design and evaluation of systems to support evidence-based clinical decision-making and tools that improve patient outcomes. His desire to help a family member with hyperacusis revealed that the best way to move our research agenda forward will be to bring all parties to the table in a meaningful way.

Dr. Juster says: “I hope this summary of and thoughts about the conference’s themes will help patients, clinicians and researchers move forward together to bring about useful treatments—perhaps a cure—for this devastating condition. I take full responsibility for the selection of topics to report on and any factual errors. Opinions expressed here are my own.”

How well were the conference’s overall theme and aims met?

The conference’s theme was “public involvement in promoting hyperacusis research and clinical practice.” Its aims: (1) raise awareness about hyperacusis, (2) exchange ideas, experiences, and research outcomes on assessment and management strategies for hyperacusis, (3) discuss implications of findings from experimental studies for clinical practice, and (4) encourage involvement of patients in guiding research directions and clinical practice. Read the full ICH2 program here.

Compared with most medical conferences, ICH-2 made a stronger effort to include the voices of patients and those involved in their lives. Patients and their families were invited, and there were sessions for them to tell their stories. The sessions incorporated ample opportunity for discussion. Applause was replaced by hand-waving, and the speaker sound level was generally well attended-to. Where I see room for further public (and patient) involvement:

- The venue was in central London—albeit on a university campus somewhat sequestered from urban noise (but not construction on one day). Patients living in London would likely have to take the (noisy) Underground, limiting attendance to patients with mild hyperacusis.

- The high cost of travel to London may have deterred hyperacusics with modest incomes. In the future, these concerns could be addressed by offering a live webinar option (using high-quality sound streaming algorithms), as some U.S. medical conferences do. Also worth considering (with the cost of the technology coming down) might be closed-caption broadcasting or transcripts.

- Develop more formal emphasis (even an in-conference workshop) on how patients, caregivers, and the public can better understand the world-view, conceptual framework, ways of working, and needs of clinicians, academics, researchers and policy-makers. This could include specific ways in which patients, clinicians and researchers can collaborate to inform research topics and priorities, information dissemination and the validity of research findings (and applicability to specific patients).

- After-conference guided follow-up to further develop stakeholder collaboration on the research agenda—and, importantly, on understanding the current and evolving state of our understanding of hyperacusis.

Is the underlying model of the mechanism of development bottom up or top down?

There is some general agreement that the subjective experience of hyperacusis is due to abnormal “gain” or amplification of auditory information in the auditory brain. But what causes the gain? At opposite ends of the theoretical spectrum:

- Bottom-up model: An injured or dysfunctional cochlea passes weak or distorted sound signals to the brain which then over-amplifies them;

- Top-down model: Sounds evoke negative thought associations because of unconscious core beliefs about oneself and the world; the mind/brain interprets these thoughts in a way that results in strong negative emotions.

While nearly all researchers (and many patients) agree that there’s an emotional component to hyperacusis, there’s less agreement about the direction of causality: Negative emotions due to the uncomfortable experience of sound coming in from a dysfunctional auditory periphery—or strong negative emotions due to thinking patterns resulting in abnormal central gain?

The latter theory was posed as forming the foundation for cognitive behavioral therapy (CBT) which—together with managed sound exposure—is a common component of hyperacusis treatment, though not always effective in many patients’ experience.

Key questions raised (though not necessarily answered):

- What is the evidence that the theory is correct? Could the direction of the causality arrow be established experimentally?

- Apart from whether the theory is correct, what is the evidence that CBT reduces the measurable and experiential components of hyperacusis (such as LDLs, tolerance for certain types of sound irrespective of their loudness, ear pain, reactive tinnitus and impact on quality of life); and what are the relative contributions of CBT and the sound therapy that often accompanies it (is it the changes in thought processes or the managed sound exposure)? For example, a randomized study (Linda Juris) found an average of 7-10 dB LDL improvement with CBT [2]; another [3] (Craig Formby) likewise found improvement in patients with hearing loss requiring hearing aids. However, both studies also involved managed sound exposure (gradual desensitization to sound-enriched environment in the former; noise generators in the latter). If cognitive appraisal-based negative emotion is the cause (rather than a consequence) of hyperacusis, wouldn’t we expect that CBT should yield a much more dramatic improvement?

- I wonder if the role of CBT is to permit managed reintroduction to safe-level sound, rather than to get at deep underlying psychology. We also need to know more about what components of CBT work in what types of patients (or when it is best applied); and which components of hyperacusis tend to be improved…and for how long.

- I also wonder if it would help to move research and treatment forward if we regarded the manifestations of hyperacusis as encompassing several categories, categorized by whether or not there is pain, tinnitus (reactive or non-) and hearing loss.

The importance of anatomic, physiologic and molecular research—and the open disciplined mind:

Concurring with the Roadmap to a Cure Path B (elucidation of hyperacusis mechanisms) and presentations at the 2015 Association for Research in Otolaryngology (ARO)[4], there is growing awareness of the detailed role of cochlea and brainstem cellular metabolism and neurology in the development and maintenance of hyperacusis.[5]

As with so much of clinical medicine, disciplined basic science may soon point the way to much-improved diagnostics and treatments that are highly individualized to patients with an array of types of hyperacusis. The conference talks and readings on recent research (mainly in animal models) make me realize that the insights we gain from studying hyperacusis are likely to have profound consequences for neuroscience and for understanding, and successfully treating, other disorders in which the central nervous system responds, often dysfunctionally, to peripheral sensory dysfunction.

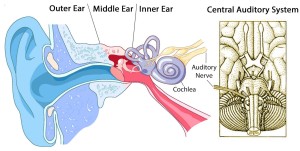

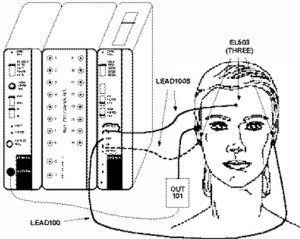

The conference juxtaposed learning about the auditory system’s anatomy and physiology with how we measure the pathways of auditory information processing, and how various expressions of functional and dysfunctional processing correlate with those measurements. For example, a tracing of electrical activity, the auditory brainstem response (ABR), can track the transmission and processing of a sound stimulus as it travels through the cochlea, the 8th nerve, and its pathways and synapses in the brainstem. The transmission pathway’s interconnections include nerves coming down from the brain (“efferents”) that can modify the neurologic interpretation of the information as it passes upwards (in “afferents”).

In addition, recent discoveries (such as those published by Marlies Knipper’s[6] group in 2015) suggest there are pain nerve fibers that send pain signals to the brain under certain conditions, possibly forming the basis of pain in hyperacusis.[7] Ear research has largely focused on the large myelinated Type I auditory nerve fibers that are responsible for sending up sound information from the cochlea’s inner hair cells (IHC). Now the role of the smaller unmyelinated Type II fibers (mainly connected to the outer hair cells, or OHC) is being investigated.

Work by Charles Liberman and others is revealing that cochlear damage impairs firing of Type I cells and the cochlear nucleus becomes hyperexcitable—a possible mechanism for pain in hyperacusis. Type II fibers may start to fire when hair cells are damaged (principally OHC?) causing the experience of pain. An open question is whether Type II fibers would fire at lesser stimulus rates once someone has acquired hyperacusis (though as Kaltenbach has found, it may be that OHC loss triggers dorsal cochlear nucleus hyperactivity).

This research—still in early days—is welcomed by people with pain hyperacusis for whom reactive pain limits their ability to use sound generators or sound therapy. Knipper opined that if we can identify the ‘target’ for these pain-transmitting nerves we may be able to use pain management technologies (perhaps even those already developed—such as drugs that affect the function of neurotransmitters, electrical or magnetic stimulation, mind/brain management, or stereotactic surgery) to ameliorate the (apparently) inappropriate biologic pain response to sound. Along with this investigation of the detailed anatomy and physiology of sound processing in hyperacusis is rapid growth in our ability to explore the molecular basis of variations in the ascending pathways and connections with the limbic system. Many discussions returned to the ABR’s components in great detail. Marlies Knipper in particular called for greater use of ABR in the clinic, aiming to better diagnose patients’ hyperacusis subtype and electrophysiology picture. We can wonder what advancements in diagnosis and personalized treatment (and treatment monitoring) may arise from widespread collection of this type of data!

The need to promote evidence-based practice, patients’ understanding of ‘evidence,’ and informed, shared decision-making

This was the subject of Deborah Vickers’[8] pre-conference talk, which was potentially very useful throughout the conference in helping listeners evaluate how confident we are entitled to be about diagnosis, evaluation, treatment and treatment monitoring.

We learned about the importance of understanding the research question to evaluating the results of a study. In practice, we may have to apply the findings of studies done on research questions or on patient populations that are different from the patient in front of us and we must make judgment calls about how important those differences are likely to be.

Equally important is that patients understand the nature of evidence and how to evaluate what they hear and read about their conditions. They must learn about the hierarchy of evidence (how confident can we be about the results of a study?) and the external validity of a study (how confident can I be that the results of this research apply to my situation?).

Clinicians must understand how to locate and evaluate evidence related to their clinical questions but they will rarely have time, resources and expertise to do this for particular questions. Usually they must rely on summaries, syntheses and structured reviews of the evidence around particular topics. Even then, clinicians must know where to look and how to know whether what they’re reading is relevant to the question at hand (computerized tools embedded in or alongside the clinical record are being deployed in clinical medicine). Perhaps in developing an agenda and useful tools that empower patients and clinicians (and help them prioritize research) we could be guided by these questions:

- How do we help clinicians stay up to date with the most current evidence, with an understanding of how the findings of studies relate to particular patients?

- How do we help patients seek the highest-quality information relevant to their situation?

- How do we help patients ask questions of clinicians in a way that supports partnership and shared, satisfying decision making?

- How do we easily catalog and prioritize the gaps in our knowledge?

In summary, patients expect clinicians to thoughtfully diagnose, treat, and monitor their progress based on the highest quality evidence that applies to their clinical circumstances, situation and preferences. Some expect to take part in decisions about diagnosis and treatment; others are more inclined to believe the clinician has weighed the applicable alternatives and their pros and cons and therefore accept their recommendations with less questioning. Clinicians, too, tend to engage in pattern thinking and without constant vigilance miss cues about the patient’s unique history, current state and their desire for informed shared decision-making.

In the reality of daily practice, clinicians will not always know how current their internal “evidence base” is, or how sound the evidence is on which they are basing their recommendations. In fact, due to the rising use of Internet resources and social media, the patient may be more up to date on their condition than the clinician. But patients are usually even less knowledgeable about what constitutes high quality evidence relevant to their particular circumstances. This is an opportunity to promote lifelong clinician education, computerized clinical decision support, knowing how to read the medical literature, and evidence-based shared decision-making supported by technology.

Public policy needs to be based on evidence and shared understanding

As noted, the voice of the patient was moderately present throughout the conference, with pointers to specific directions for greater inclusion. In a sense, the way patients were (and were not) included exemplifies the current drawbacks around informing policy and prioritizing research: Only patients with mild hyperacusis and who lived in London (or could afford and were able to travel) could attend; all others will have to learn second- or third-hand about the conference from written summaries and discussion boards. In parallel, public opinion is “heard” based on who can speak, their perceived relative importance and how loud their voice is (considerations of numbers, money and power).

How do we ensure the “voices” are fairly represented in the conversation—and equally important, that they are understood and that they understand the voices of others? ICH-2 took a public stand on the importance of having the conversation—an important step forward. I recommend we use this or a similar platform to formally plan next steps, based on the gamut of stakeholder representation and a method of ensuring the perspectives are known and understood.

Imagine patients, those who are in their lives, academics, researchers, clinicians and policy-makers seated around a table. The conversation starts out with each stakeholder saying “What I wish you understood about me is…” and asking the others “What do you wish I understood about you?” With skillful moderating we could from these mutual understandings develop and prioritize a research (and education) agenda that everyone could stand behind.

John Drever[9] from the Unit for Sound Practice Research, Goldsmiths, University of London, illustrated the intersection of research and policy with a talk about the noise effects from ultra-rapid hand dryers in public toilets. These dryers (such as the Dyson Airblade) are much louder than older heat-fan dryers because they produce a thin stream of air traveling at 400 mph. He showed the British standards for sound in public places, which are far exceeded by these types of machines even when testing is carried out in anechoic chambers rather than typical public environments. In toilets the noise exposure will be greater due to reverberation and distortion. Of concern, some populations are particularly vulnerable to noise injury (e.g. fetuses, infants and children; the elderly; people with certain chronic diseases; the visually impaired; hearing aid users; and people with hyperacusis). He quoted from several people’s experience. However, the manufacturer says that “people care more about the machine’s cleaning performance than they do about the noise.” Prof. Drever concluded that there’s a need for a large-scale project to assess the noise impact, including surveying the full range of users; product testing in the field; standardized information on products’ loudness and frequency content; state-of-the-art standardized installation informed by an understanding of acoustics; and collaboration between sound engineers and sound designers.

Personalized medicine and the need to gear treatment to type of hyperacusis

Our increasingly detailed understanding of the role genes play in health, coupled with the exponentially decreasing cost of whole-genome sequencing, has given us an unprecedented ability to personalize diagnosis and treatment. In addition to the role of genes is a growing understanding of the roles of diet, physical activity, how we respond to stressors, learning, and many facets of the social and physical environment (“the environtome”). Together with the emerging ability of computers to learn and guide complex decision-making, we are poised to enter a world in which patients and clinicians can work together to custom-design treatments with great precision.

As with many clinical conditions we are likely to find that there are several types of hyperacusis—with or without pain; with or without tinnitus (reactive to sound, other stimuli or apparently chronic), and so on. While some researchers and clinicians recognize these types, we’re only beginning to ask about them and take types into account when doing and reporting research or developing customized treatments for patients.

This is a perfect opportunity to embody a patient (person)-centered approach, to seek to understand and personalize treatment to the patient rather than only the diagnosis. To do this requires disciplined open-mindedness to get at the patient’s experience as it evolves over time, the ability to seek and apply evidence, and treatment that is an informed partnership.

This is much easier to say than to implement—but the expressed perspective of the conference (aiming towards mutual understanding and collaboration among patients, clinicians, academics, researchers and policymakers) is a firm step in this direction. What can we do now to further it?

An example of furthering the kind of understanding of the patient’s experience that can be used in research and potentially drive therapeutics is Hyperacusis Research’s distribution of the CoRDS Registry hyperacusis questionnaire to audiologists and people with hyperacusis. CoRDS (Coordination of Rare Diseases at Sanford) maintains registries of patients with certain conditions such as hyperacusis. The Hyperacusis questionnaire documents details of the patient’s hyperacusis history, evaluation, treatments (and results) and—importantly—portrays a detailed picture of the condition’s impact on many aspects of the patient’s life.

What are the proper roles of subjective and objective testing in diagnosing hyperacusis?

Opening the conference, Hashir Aazh [10] offered a practical, person-centered definition of hyperacusis: a term used to describe the experience of intolerance to ordinary sounds in a way that causes significant distress and impairment in the sufferer’s social, occupational and personal domains. A discussion around the condition’s impact on quality of life followed: isolation, career (especially if it involved noise), social functioning and travel.

What constitutes ‘ordinary sound’ is subjective, though it may be useful to say that it’s the spectrum of sounds to which becoming intolerant would substantially disrupt a person’s life. Beyond that, we need reliable and repeatable measures on which we can base diagnosis, treatment planning, monitoring and research, but which still reflect the complexity of patients’ experience.

fMRI data was presented that may one day point to diagnostic criteria and even help characterize and monitor treatment but today the closest we have to a metric useful in clinical practice is the loudness discomfort level (LDL) also known as the uncomfortable loudness level (ULL). Some investigators also measure the maximum comfort level (MCL)—the loudest pure tone (at various frequencies) that the listener says is comfortable. LDLs are measured at several pure-tone frequencies under controlled conditions and have been shown to be relatively repeatable over time.

However, they are not objective measures (like blood cholesterol for example), are tested in a specially designed environment (soundproof room) and do not get at a common problem—intolerance to softer sounds with certain timbral qualities such as electrical equipment or hammering. LDL testing does not distinguish between discomfort and actual pain.

Research should focus on the value of objective testing (e.g. ABR and MLR; fMRI is too loud for most people with hyperacusis); research on the neurophysiology of hyperacusis such as that presented by Marlies Knipper may bear fruit in this arena. Research should also address standardized sound-timbre testing and the correlation of improvements in LDLs with the patient’s quality of life. For example, if LDLs improve to near normal levels, how does that correlate with tolerance to certain sound-timbres, reactive tinnitus and reactive ear pain? If the patient’s LDLs reach 90 dB, can they attend a movie or concert with no or minimal sound protection? How does improvement in LDLs correlate with the often-reported experience of sound fatigue, in which the patient starts out quite comfortable with the sound level but their tolerance decays with time? Reflecting the theme of this conference, this is a topic ripe for collaboration among patients, clinicians and researchers.

Hyperacusis and autism: An entry point to learn about genomics and hearing disorders?

Audiologist Isabella Pereira[11] reviewed the literature on hyperacusis and autism spectrum disorder which shows that hyperacusis is more common in such individuals, who have problems with auditory processing—perhaps related to ineffective signal inhibition by efferent nerves on the afferents (nerves that carry sound signals from the cochlea towards the brain).

Autism spectrum disorders are strongly suspected of having a complex genetic basis (still being unraveled); and perhaps susceptibility to hyperacusis is at least partly genetically determined as well. Pereira provided intriguing evidence that the gene CD38 encodes a part of the oxytocin signaling pathway and that some variations (‘single nucleotide polymorphisms’) in this gene have been associated with low oxytocin levels in ASD. Furthermore, human studies have shown that administration of oxytocin improves autistics’ recognition of emotion, promotes social behavior and improves auditory processing of social stimuli.

With the cost of genome sequencing already under $1000 and plummeting, we may be on the verge of developing a deep and actionable understanding of the genetics of hyperacusis and the complexities of auditory processing.

Misophonia is not hyperacusis: An ingenious study

It’s important to distinguish hyperacusis from misophonia and recruitment[12]; these diagnoses are sometimes confused to the detriment of the patient. For example, if a patient with hyperacusis is misdiagnosed as having recruitment, they won’t be advised to protect their ears against loud sounds that might cause their hyperacusis to progress (or at a minimum make them very uncomfortable), and they may be offered useless treatments.

Misophonia refers to strong negative reactions (including anger) to specific trigger sounds (e.g. certain consonants like p, k or s; chewing or whistling). Unlike hyperacusis, misophonia does not involve general sound intolerance. Some experts postulate a psychological basis such as not being allowed to leave the table when adults were chewing loudly (and perhaps being ridiculed for wanting to).

But unlike PTSD, there is not a strong, obvious traumatic event. (Comment: When only some people psychologically and neurologically ‘interpret’ fairly common events to have such potent lifelong impact, I suspect a strong genetic component.) Unlike obsessive-compulsive disorder, misophonia does not necessarily involve compulsive behavior, though studies show that many people with misophonia also have OCD. And misophonia is also not phonophobia, where anxiety (rather than anger) is the dominant emotion.

Damiann Denys[13] pointed out that distinguishing among hyperacusis, misophonia, phonophobia and social disorder involving sound will permit more effective research and treatment—and certainly patients with these conditions will have the experience of being understood!

Relevant to our paradigms for doing research to distinguish among the many auditory system dysfunctions, he investigated whether misophonia involves dysfunction in early auditory processing by examining differences in specific components of auditory evoked potentials. He found evidence of orientation towards new sounds that was not affected by the subject’s attention. He then had subjects watch a silent movie and played tones in a pattern interrupted at random intervals by an “oddball” misophonia-triggering sound below the level of conscious awareness. People with misophonia responded differently in evoked potential testing than those without. When the subjects and controls were looked at with fMRI, the subjects (with misophonia) showed increased activity in deep parts of the brain that regulate unconscious biological functions and are associated with regulation of arousal and emotional responses.

Comment: Taken together, many presentations illustrated the value of and need for clear definitions and appropriate distinctions among the conditions that occupy the wide spectrum of auditory processing disorders. Understanding the functions of the train of auditory processing and neurologic behavior that underlie the distinctions (and preferably the genetics) can only be helpful for patients and those around them.

Therapies—be they cognitive, psychological, molecular, electromagnetic, dietary, surgical, medical device or other—must be based on a detailed and actionable understanding of what today appears as a profoundly complex system of sound perception and interpretation. The many animal and human investigative techniques shown at ICH-2 (likely the tip of an iceberg) reveal our ingenuity and resourcefulness, and the potential for even more rapid progress as we learn to work together more effectively.

Low-level laser cochlear therapy: Do the findings support the ‘bottom up’ model of hyperacusis? And how do we understand and use new evidence?

A presentation on the use of low-level laser therapy (LLLT) by Eugenio Hack[14] (with a related poster from Joaquin Prosper[15]) may point research (and potentially therapy) in a new (though not exclusive) direction. Equally important are the findings’ (if validated) contribution to the discussion of the top-down/bottom-up models of hyperacusis; and the opportunity to apply the principles of evidence-based research and practice.

Laser light is highly tuned to deliver one pure frequency with all of its waves in lockstep. This permits the laser device to focus a precise wavelength of light energy on a target such as the cochlear hair cells. Certain chemical reactions in cells can be affected by light at specific wavelengths; in the context of LLLT two specific wavelengths in the visible red and infrared are used to promote cellular energy production and to possibly increase local circulation to impaired tissue[16]. LLLT is typically used therapeutically for tendon, ligament and muscle injuries and some researchers speculate that red and infrared LLLT can promote improved function and possibly even cellular regeneration – both of great interest to patients with dysfunctional or damaged cochleae.

After offering possible explanations of why LLLT might be beneficial to patients with cochlear dysfunction, Hack summarized findings on a series of 60 clinic patients with varying degrees of hyperacusis. He claimed that nearly all improved to the point of no longer having hyperacusis by LDLs. A deeper analysis[17] of 57 hyperacusis patients by Dr. Prosper and Prof. Graffelman (a university statistician) found about 5 dB improvement in hearing thresholds and an average improvement in LDLs of 16 dB. While this is an impressive improvement, the detailed analysis is not consistent with complete resolution of hyperacusis (with hyperacusis defined as LDLs of 90 dB or less in at least 2 of the 22 tested frequencies, 11 in each ear)[18].
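To make the LDL-based definition concrete, here is a minimal Python sketch of the criterion as stated above (LDLs of 90 or less in at least 2 of 22 readings). The function names and the two patient baselines are invented for illustration and are not data from the LLLT analysis; the sketch also assumes, unrealistically, that every reading improves by the same 16 dB.

```python
from typing import List, Sequence

LDL_CUTOFF_DB = 90         # from the text: "LDLs of 90 or less"
MIN_LOW_READINGS = 2       # from the text: "in at least 2 of the 22 tested frequencies"
READINGS_PER_PATIENT = 22  # from the text: 11 frequencies in each ear


def meets_hyperacusis_criterion(ldls_db: Sequence[float]) -> bool:
    """Return True if at least two of the 22 LDL readings are 90 dB or less."""
    if len(ldls_db) != READINGS_PER_PATIENT:
        raise ValueError("expected 22 LDL readings (11 per ear)")
    low_readings = sum(1 for level in ldls_db if level <= LDL_CUTOFF_DB)
    return low_readings >= MIN_LOW_READINGS


def shift_all(ldls_db: Sequence[float], gain_db: float) -> List[float]:
    """Hypothetical helper: apply the same improvement to every reading."""
    return [level + gain_db for level in ldls_db]


# Invented baselines, for illustration only (not data from the study).
baselines = {"patient A": [80.0] * 22, "patient B": [65.0] * 22}

for name, before in baselines.items():
    after = shift_all(before, 16.0)
    print(name,
          "| meets criterion before:", meets_hyperacusis_criterion(before),
          "| after +16 dB:", meets_hyperacusis_criterion(after))
```

Under these assumptions, the same 16 dB gain moves the milder hypothetical patient out of the definition while the more severe one still meets it, which is one way an impressive average improvement can coexist with incomplete resolution.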

Still, these are impressive results. Why, then, is the world of academics and clinicians not cheering? The answer sheds light on a way forward towards achieving the mighty goals of the conference—‘mighty’ because of the potential to surface, understand and serve the interests of our stakeholders—patients (and those in their lives), clinicians, academics, researchers, policy-makers and policy-implementers.

Let’s begin with recognizing the reality that people often defend their points of view because they believe that doing so will best serve their needs. This defense can be well-reasoned, based on experimental evidence, and reflect deeply held beliefs about the way things are. Nonetheless, as Krishnamurti pointed out, “All thinking begins with conclusions.” Our thinking may have woven into it assumptions that we don’t know that we don’t know are assumptions.

If this approach to solving research and clinical problems worked, clinicians and patients would be less confused and more delighted than they are. Is there another way? Perhaps, but if there is, we must find or create it together. People with very different points of view have very similar needs (being loved and appreciated, being understood, making a meaningful contribution, peace, comfort, recognition…) though they can employ quite different ways of getting them met.

A thought experiment: Now suppose our various stakeholders were sitting around a table and before discussing approaches to a task they had to convince each other that they had heard and understood each other’s points of view and themselves came to feel understood by the others (“What do you wish I understood about you?” “What I wish you understood about me”).

In this (thought-experimental) world the time would hopefully come when all stakeholders would recognize and appreciate the others’ contribution. And then perhaps we could spend a greater proportion of our energies moving forward.

In addition, the LLLT material presents a superb example of what needs to happen from a science perspective to move towards more widespread adoption (and potentially the cheering) … or modification or rejection of a therapy: high-quality evidence. Ideally, clinical investigators would set up a double-blind, placebo-controlled trial in which some patients were randomized to LLLT and others to sham treatment (non-laser red light, for example)[19].

This type of study neutralizes two important problems that can arise with studies based on patients’ before-and-after (pre-post) metrics: (1) selection bias (people who volunteer for a treatment may differ from those who don’t in ways that influence the outcome); and (2) we don’t know what would have happened to the same people without the treatment. “What would have occurred in the absence of treatment?” is the question on which the validity of all studies rests.
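The logic of a randomized, sham-controlled comparison can be sketched in a few lines. This is only an illustration under stated assumptions: the function names, the arm labels, and all LDL values below are made up for the example and do not come from any study discussed here.

```python
import random
from statistics import mean


def randomize(patient_ids, seed=0):
    """Randomly split patients into two arms of (nearly) equal size.

    Chance, not the patient or clinician, decides who gets which
    treatment, which is what addresses selection bias.
    """
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    shuffled = list(patient_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"lllt": shuffled[:half], "sham": shuffled[half:]}


def mean_change(pre, post):
    """Average before-to-after change across patients in one arm."""
    return mean(after - before for before, after in zip(pre, post))


def treatment_effect(lllt_change, sham_change):
    """Change in the treated arm minus change in the sham arm.

    The sham arm stands in for "what would have occurred in the
    absence of treatment".
    """
    return lllt_change - sham_change


# Hypothetical average LDLs (dB) before and after, three patients per arm.
lllt_change = mean_change([70, 75, 80], [85, 90, 95])
sham_change = mean_change([70, 75, 80], [76, 81, 86])
effect = treatment_effect(lllt_change, sham_change)
```

A pre-post case series reports only the first of these two changes. The randomized design subtracts the improvement seen in the sham arm, so natural recovery and placebo response are not credited to the therapy.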

However: The motivation to do more rigorous and valid studies has to come from somewhere—usually that’s pre-post clinical case series like this one. And formal randomized studies are challenging to do—patients have to be recruited, carefully screened and give informed consent to be studied, and studies must be conducted fastidiously with well-trained professionals. Such studies are costly—where will the funds come from? Many people don’t want to be randomized (therefore people who are willing to be randomized may be very different clinically from those who aren’t), and especially with surgery, sham treatment can be risky[20]. It may not be feasible to do randomized studies in such instances, yet patients have the right to know about emerging research about a disease that can shake the foundations of their lives.

But along with this right comes a responsibility—and an opportunity. Patients (meaning all of us) must become evidence-literate: They need to ask about and understand the basis for a claim about tests and treatments. They must learn how to ask their clinicians “What are my options and what are the benefits and risks of each? Why do you recommend what you are recommending? How will you know if this is working or should be modified?” As important, their clinicians must prompt them to ask such questions (or bring them up first). Equally, the clinician must seek the experience that their perceptions and rationale have been heard, and that the patient shows evidence of understanding (“can you repeat your understanding of this in your own words?”). The same sort of dynamic will serve the entire stakeholder collaboration. Using it, we are likely to discover that there is much of importance and power that we don’t know that we don’t know.

For the conference, I would have liked more about…

- A compendium (preferably an online database) of research findings on hyperacusis (including their level of evidence), organized around the Roadmap to a Cure’s four domains (the references cited in the two-part hyperacusis review published in the American Journal of Audiology are an excellent start). In particular, TRT/sound therapy; CBT; drugs; LLLT; and the status of research around cochlear regeneration. A panel on “what I think is likely to lead to a breakthrough” would have been helpful, and fun.

- Longitudinal studies on the course of hyperacusis patients treated with different modalities and classified by, e.g., the four types of hyperacusis, age, gender, and cause of hyperacusis

- Studies (or well-written case histories) on people with hyperacusis who apparently completely recovered, written in a way that supports insights into common factors for long-term recovery, apparent recovery then relapse, etc.

- More of a 101-like grounding in the electrophysiology of hearing (or the attendees could have been directed to the excellent online reference, http://www.cochlea.eu/en; or an audiology text that covers the subject in more depth, such as Audiology Diagnosis (RJ Roeser)).

- The preconference day lectures about electrophysiology tantalized us with the idea that hearing disorders could be diagnosed (or at least we could gain insight) with tests such as auditory brainstem responses (ABR), middle latency responses (MLR), oto-acoustic emissions, etc. I would have liked more on this, particularly if thought to be relevant to treatment of various types of hyperacusis.

Footnotes

[1] The Health Information and Management Systems Society defines medical informatics as “the interdisciplinary study of the design, development, adoption and application of information technology-based innovations in healthcare services delivery, management and planning.” Much is missing from this technology-oriented perspective, such as the study of how clinicians, patients/families, doctors, and society make clinical decisions; and on the importance of valid methods of evaluating the effect of diagnostic tests and treatments.

[2] Juris L, Andersson G, Larsen HC, Ekselius L. Cognitive behavior therapy for hyperacusis: A randomized controlled trial. Behaviour Research and Therapy 2014;54:30-37.

[3] Formby C, Hawley M, Sherlock LP et al. Interventions for restricted dynamic range and reduced sound tolerance: Clinical trial using a tinnitus retraining therapy protocol for hyperacusis. Proceedings of the Meetings on Acoustics, Acoustical Society of America, June 2013.

[4] https://hyperacusisresearch.org/overview-of-2015-aro-midwinter-meeting/

[5] For an excellent, well-illustrated primer on the anatomy and workings of the pathways from ear to brain, see http://www.cochlea.eu/en/cochlea.

[6] Professor, Molecular Physiology of Hearing, University of Tubingen

[7] Personal comment: I wonder if spasm of the tensor tympani—a tiny muscle that tightens to brace the eardrum and thus protect the ear from loud sound—could also be involved in pain hyperacusis.

[8] University College London Ear Institute

[9] Professor of Acoustic Ecology, University of London (UK)

[10] (Conference organizer) Head of Tinnitus and Hyperacusis Therapy Specialist Unit, Royal Surrey County Hospital, Guildford, UK

[11] Federal University of Minas Gerais (Brazil)

[12] Recruitment, which is often linked to hearing loss, is the experience that normal-level sound is too soft, but when the volume is increased a threshold is reached beyond which the sound is perceived as suddenly much louder. In hyperacusis, normal sound is perceived as not just loud but intolerably so. Hyperacusis is often present without hearing loss.

[13] Professor of Psychiatry, University of Amsterdam (Netherlands)

[14] Otolaryngology specialist, Rotger Hospital, Palma de Mallorca (Spain)

[15] Otoclinica, Madrid (Spain)

[16] This explanation of how laser light enhances cellular energy production has recently been questioned and an alternate theory proposed: Coherent light at certain frequencies may reduce friction in the interface between the cells’ energy factories (mitochondria) and the water immediately surrounding them. See Sommer AP, et al. Light effect on water viscosity: Implications for ATP biosynthesis. Nature Scientific Reports July 8, 2015. For a popular account, see Burst of light speeds up healing by turbocharging cells. New Scientist July 18, 2015.

[17] Prosper J, Graffelman J. The analysis of audiometric measurements before and after low level laser therapy of Spanish patients with hyperacusis. 2013. Available from www.otoclinica.org.

[18] For example, in the left ear, 25% of patients had average LDL of 72 dB or less before treatment; 25% had average LDL of 89 or less after (these are not necessarily the same group of patients). However, the patient with the lowest average pre-treatment LDL had 52 dB; the patient with the lowest average post-treatment LDL had 70 dB.

[19] Possibly people with hyperacusis in both ears could have their ears randomized, but to the extent that the experience of hyperacusis involves central brain processing, that might not be a valid setup.

[20] For example, Dr. Silverstein published a report of 2 patients (3 ears) with hyperacusis treated with surgical reinforcement of the cochlear oval and round windows that showed promising results on LDLs, the Hyperacusis Questionnaire, and quality of life. See Silverstein H, Wu YH, Hagan S. Round and oval window reinforcement for the treatment of hyperacusis. Am J Otolaryngol 2015;36(2):158-162.

Thank you so much for your invaluable contributions to helping those with Hyperacusis. I really appreciate it.

That is a great, in-depth report. Thank you very much for taking the time to write it up and present it to us hyperacusis sufferers.

Excellent summary. Provides a clear, thoughtful summary of the conference and suggests next steps for researchers and clinicians that may help further progress with this debilitating condition.

About time this is taken seriously, the people it affects do suffer, it stops them from having any sort of normal life. I just wish more people including professionals were aware of this problem. Maybe a T.V documentary with sufferers and experts on this condition is the next step.

So where is the cure? Everybody is summarizing something but no cure :((